Runtime control for regulated AI workers

Control what AI workers can do in production.

Aegis gives regulated teams workload identity, runtime policy enforcement, approval routing, and tamper-evident audit for AI workflows that touch sensitive data and consequential actions.

30-minute working session for teams evaluating runtime controls around a real AI workflow.

Each AI worker runs under a registered workload credential issued by the Aegis IDP.

Allowed sources, tools, and capabilities are declared per workflow and enforced at the gateway.

Cedar policies evaluate every model and tool call inline, default-deny.

Consequential actions pause and route to the right reviewer with policy and context attached.

Every decision lands in an HMAC-chained record you can replay end to end.

The buyer problem

AI workers are reaching production before the control model is ready.

Regulated teams want AI to handle more than drafting and search. The valuable workflows read customer context, call internal tools, recommend decisions, and sometimes trigger operational changes.

That creates a control gap. Existing release review can approve code, but it cannot answer what an AI worker did at runtime, which data it touched, which policy allowed or blocked it, who approved a consequential action, and how the decision can be replayed later.

Inside the product

What enforcement looks like at runtime.

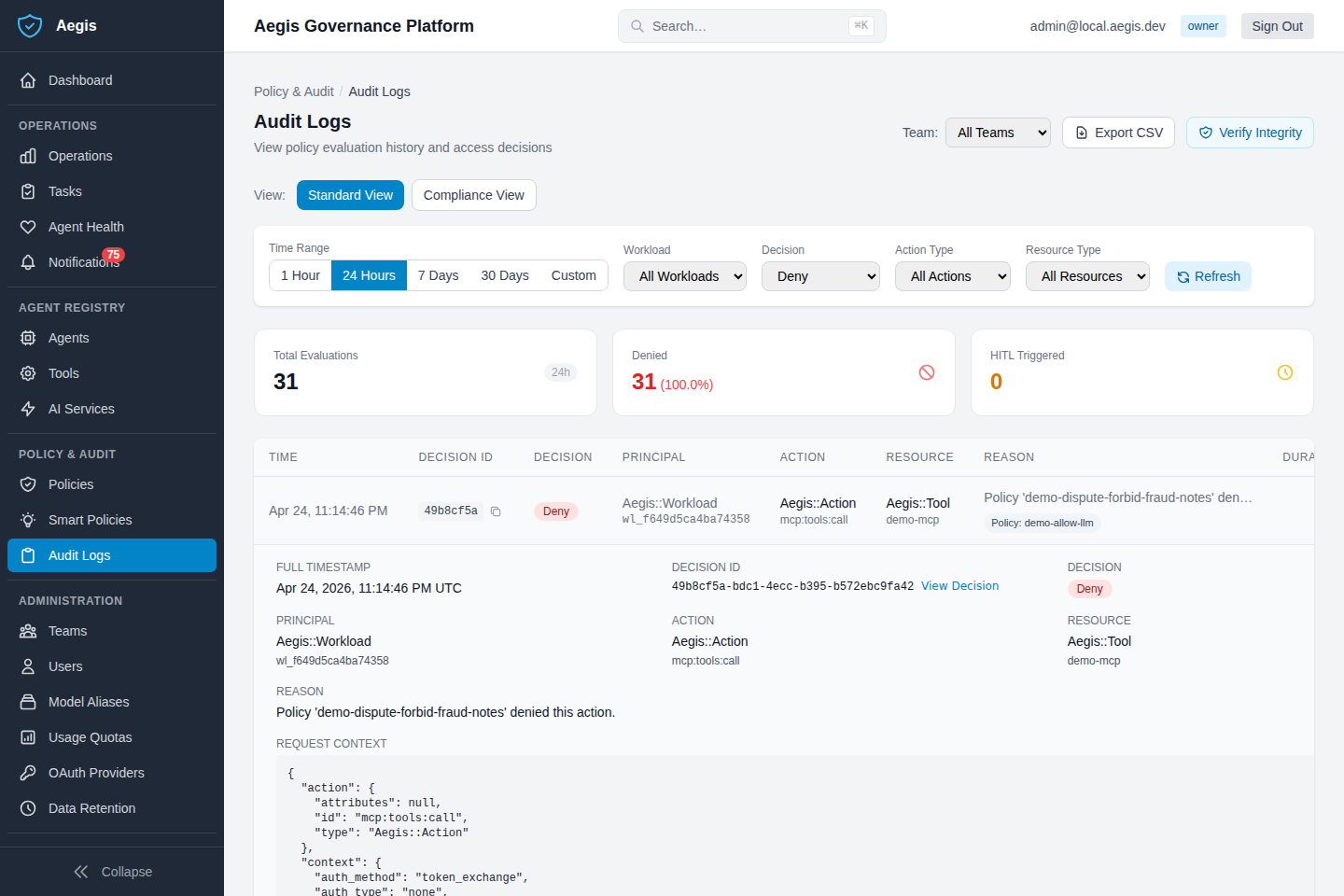

The audit log captures every policy decision — allow, deny, approval — with workload identity and full request context attached. Every record is HMAC-chained for tamper-evidence.

Every AI workflow has control points

A governed workflow, end to end.

Here is what those control points look like in practice — illustrated with a real dispute-remediation flow run against a live Aegis instance. DisputeBot-v1 starts with a workload identity, gets the context it needs, gets denied access to records it should not see, pauses on a credit action that needs a human, and lands every step in a tamper-evident audit chain. This is the current Aegis proof flow, not an aspirational diagram.

Worker starts with a scoped workload identity

The Aegis IDP issues a workload JWT for DisputeBot-v1. Owner, scope, and the dispute workflow are bound to the credential before any tool call.

Customer and transaction context are allowed

Case lookup, customer history, and transaction details pass the Cedar policy check for this workflow and reach the worker.

Fraud investigation notes are denied at the gateway

The deny policy demo-dispute-forbid-fraud-notes blocks the read. The denied attempt is captured with full request context.
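The allow and deny steps above follow the way Cedar combines policies: a request is denied unless at least one `permit` policy matches, and any matching `forbid` policy overrides the permits. The Python sketch below models only that decision rule. It is not Cedar syntax and does not call a Cedar engine. The resource names, the workflow name, and the permit policy id are hypothetical; only `demo-dispute-forbid-fraud-notes` comes from the flow described here.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Request:
    principal: str
    action: str
    resource: str
    workflow: str


@dataclass(frozen=True)
class Policy:
    policy_id: str
    effect: str  # "permit" or "forbid"
    matches: Callable[[Request], bool]


# Hypothetical resource names for the dispute workflow.
ALLOWED_READS = {"case", "customer_history", "transaction_details"}

POLICIES = [
    Policy(
        "demo-dispute-permit-case-context",  # hypothetical policy id
        "permit",
        lambda r: r.workflow == "dispute-remediation"
        and r.action == "read"
        and r.resource in ALLOWED_READS,
    ),
    Policy(
        "demo-dispute-forbid-fraud-notes",
        "forbid",
        lambda r: r.resource == "fraud_investigation_notes",
    ),
]


def evaluate(request: Request, policies: List[Policy]) -> Tuple[str, List[str]]:
    """Return the decision and the ids of the policies that determined it."""
    forbids = [p.policy_id for p in policies
               if p.effect == "forbid" and p.matches(request)]
    if forbids:
        return "deny", forbids  # a matching forbid always wins
    permits = [p.policy_id for p in policies
               if p.effect == "permit" and p.matches(request)]
    if permits:
        return "allow", permits
    return "deny", []  # default-deny: no permit matched
```

Returning the determining policy ids alongside the decision is what lets a denied attempt be recorded with the policy that blocked it.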

Credit action pauses for human approval

The proposed $254.97 credit exceeds the approval threshold set in policy. Aegis pauses execution and routes the action to a card-ops operator with policy and context attached.
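A pause-and-route step of this kind can be sketched in a few lines of Python. The threshold value, the policy id, the status strings, and the function names below are all hypothetical; the source states only that the $254.97 credit is routed to a card-ops operator.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional

# Hypothetical threshold; the real value is not stated in this document.
APPROVAL_THRESHOLD = Decimal("100.00")


@dataclass
class CreditAction:
    action_id: str
    worker: str
    amount: Decimal
    status: str
    reviewer_queue: Optional[str] = None
    policy_id: Optional[str] = None
    decided_by: Optional[str] = None


def route_credit(action_id: str, worker: str, amount: Decimal) -> CreditAction:
    """Pause credits above the threshold and attach policy and reviewer."""
    if amount > APPROVAL_THRESHOLD:
        return CreditAction(
            action_id, worker, amount,
            status="pending_approval",
            reviewer_queue="card-ops",
            policy_id="demo-dispute-credit-approval",  # hypothetical id
        )
    return CreditAction(action_id, worker, amount, status="auto_approved")


def decide(action: CreditAction, approver_role: str, approve: bool) -> CreditAction:
    """Record a reviewer decision; only the assigned queue may decide."""
    if action.status != "pending_approval":
        raise ValueError("action is not awaiting approval")
    if approver_role != action.reviewer_queue:
        raise PermissionError("approver is not in the assigned reviewer queue")
    action.status = "approved" if approve else "rejected"
    action.decided_by = approver_role
    return action
```

Amounts are held as `Decimal` rather than `float` so that threshold comparisons on currency values are exact.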

The decision trail is replayable

Identity, allowed reads, denied reads, approval decision, approver role, and final outcome are preserved in the HMAC-chained audit log for later inspection.
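For readers unfamiliar with HMAC chaining, the sketch below shows the general technique: each record's MAC covers the previous record's MAC plus the current event, so editing or removing an earlier record invalidates everything after it. The record layout, field names, and demo key are assumptions for illustration and do not describe the actual Aegis log format.

```python
import hashlib
import hmac
import json
from typing import Dict, List

AUDIT_KEY = b"demo-only-audit-key"  # hypothetical key, for the demo only
GENESIS = "0" * 64  # placeholder "previous MAC" for the first record


def _mac(prev_mac: str, event: Dict) -> str:
    """MAC over the previous MAC plus a canonical encoding of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(AUDIT_KEY, prev_mac.encode("ascii") + payload,
                    hashlib.sha256).hexdigest()


def append(chain: List[Dict], event: Dict) -> None:
    """Add an event, linking it to the MAC of the record before it."""
    prev = chain[-1]["mac"] if chain else GENESIS
    chain.append({"event": event, "prev_mac": prev, "mac": _mac(prev, event)})


def verify(chain: List[Dict]) -> bool:
    """Replay the chain from the start and recompute every MAC."""
    prev = GENESIS
    for record in chain:
        if record["prev_mac"] != prev:
            return False  # a record was removed or reordered
        if not hmac.compare_digest(record["mac"], _mac(prev, record["event"])):
            return False  # an event was altered after it was written
        prev = record["mac"]
    return True
```

A chain like this detects edits, reordering, and removal of records in the middle. Detecting truncation from the end additionally requires anchoring the latest MAC somewhere outside the log.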

What teams can evaluate

Concrete control surfaces for a pilot conversation.

Aegis sits as a control layer in front of model and tool access. Adopting it for a workflow involves workload registration, gateway routing, Cedar policy authoring, and reviewer setup for that workflow; it is not a drop-in install. The surfaces below are what a pilot evaluates.

Self-hostable control plane

The control plane is designed to run inside the customer environment so sensitive workflow data stays within the customer's boundary.

Gateway enforcement

Place policy in front of model and tool access without rebuilding every application workflow that needs it.

Inspectable Cedar policies

Use explicit, declarative rules that product, platform, risk, and compliance stakeholders can read together.

Human-in-the-loop routing

Send actions to the right reviewer when policy thresholds, data sensitivity, or business risk require it.

Tamper-evident audit log

Keep an HMAC-chained record of identity, access, policy decisions, approvals, denials, and outcomes.

Workflow-first adoption

Adopt the controls around one AI worker first. Evaluate the control model, then decide where the same pattern should apply next.

Where Aegis is today

Start with one governed workflow.

The first proof point is governed dispute remediation: a real AI worker, a real Cedar deny policy on sensitive context, real human approval routing on a credit action, and a real HMAC-chained audit log across the full sequence. Concrete enough to evaluate against your own workflow controls.

- Workload identity issued by the Aegis IDP

- Default-deny Cedar evaluation with an explicit deny policy on sensitive records, exercised end to end

- Operator approval routing for credit and other write actions

- Replayable audit trail across allowed, denied, approved, and completed steps

Where it extends

Same control pattern, more regulated workflows.

The same primitives — identity, policy, approval, audit — are designed to apply across additional regulated AI worker workflows. Pilot one, and the same controls become reusable for the next, instead of a new bespoke review process each time.

Aegis is being evaluated through structured pilot conversations with regulated product, platform, and control teams. Treat the public site as an honest read of what is shipped today, not a claim of current customer deployments.

Design partner fit

For teams with a real AI workflow and a real approval problem.

The best pilot conversations are with regulated product, platform, operations, or control teams that already know which AI worker they want to deploy, but need a stronger answer for policy enforcement, human review, and audit before it reaches production.

- You have a sensitive workflow where AI can assist but should not act unchecked.

- You need runtime proof of what the worker accessed, attempted, and completed.

- You want to evaluate controls around a concrete pilot, not a generic AI policy deck.

Built by someone who has lived the problem

Dorin Ciobanu

Founder & CEO, Aegis

Previously at JPMorgan Chase, working in software engineering and security controls inside regulated banking systems. I have seen how compliance review operates at scale — and exactly how it breaks down when AI workers start crossing data boundaries and triggering operational changes.

Aegis is the platform I would have wanted: runtime identity, policy, and audit that gives product teams a deployment path and gives control functions a defensible answer.

Stay in the loop

Not ready for a pilot conversation?

Leave your work email. We will reach out when Aegis is relevant to your AI deployment timeline — no sales sequence, just relevant updates.

Pilot conversation

Scope the workflow you want to control.

Bring the workflow, the systems it touches, the actions that require approval, and the audit questions your team has to answer. Aegis can be evaluated around that concrete operating problem in a focused pilot conversation.